
Ten tips to make Storyline courses more accessible:

Fall forward and start now. Doing this retrospectively was a bit painful. Now that we know what to do, it will just be part of our builds moving forward.


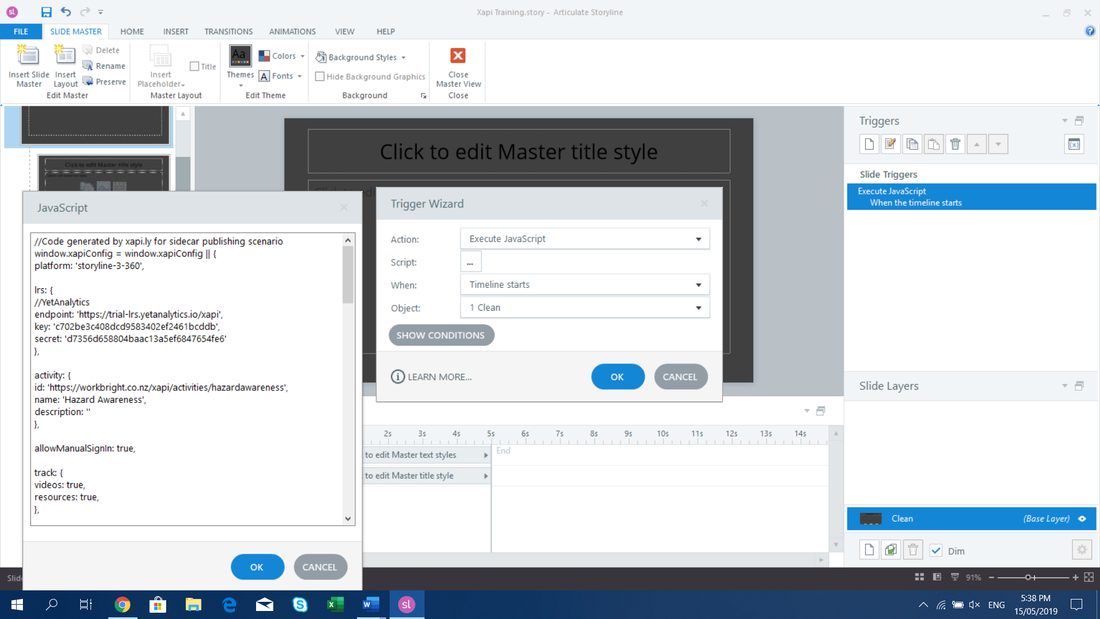

As part of my presentation to iDesignX, I will be sharing how we did the JavaScript for one of our clients. I have attached the file below for you to view, and download if you like. We used JavaScript to do the following:

This was a way to solve the problem of having no LMS, having tens of thousands of possible learners, and certainly no budget to buy one that size. For now I will simply add the JavaScript file, but I will come back soon and talk about this in more detail. Due to a flyer not turning up on time, I may also add here the IDEA Academy flyer that was meant to be in the iDesignX bags.

About five years ago, I started saying to anyone who would listen that there aren't enough courses on Instructional Design. I myself was taking the Open Polytechnic course on Elearning, and I was going through the frustration of having to take everything they taught me about using elearning in a tertiary setting and trying to apply it to corporate rapid elearning. For someone who still felt new and inexperienced, that was a challenge.

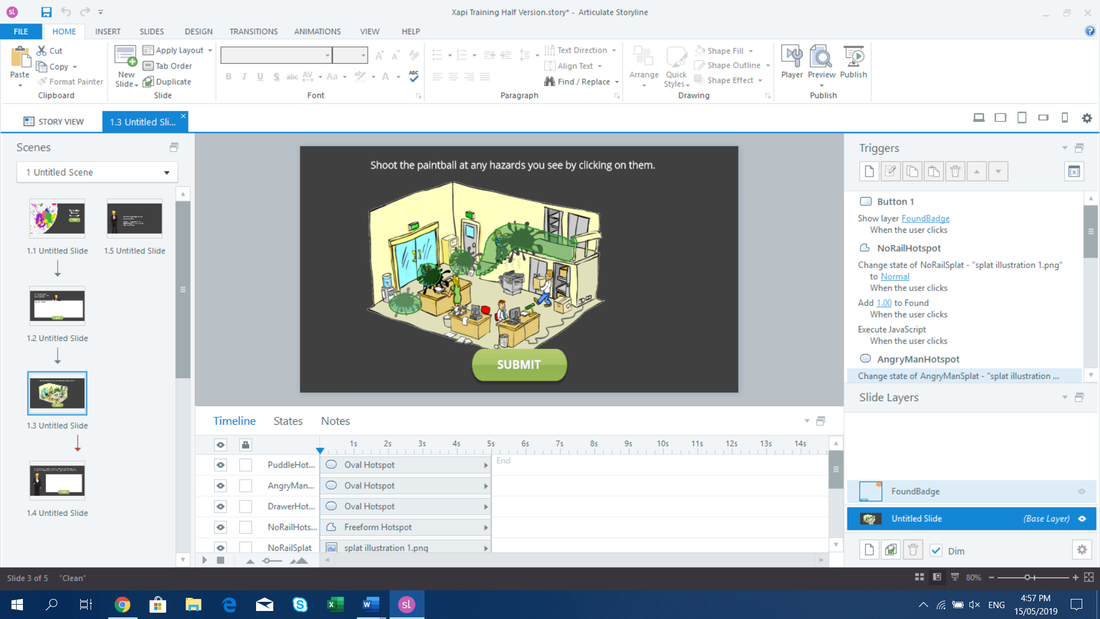

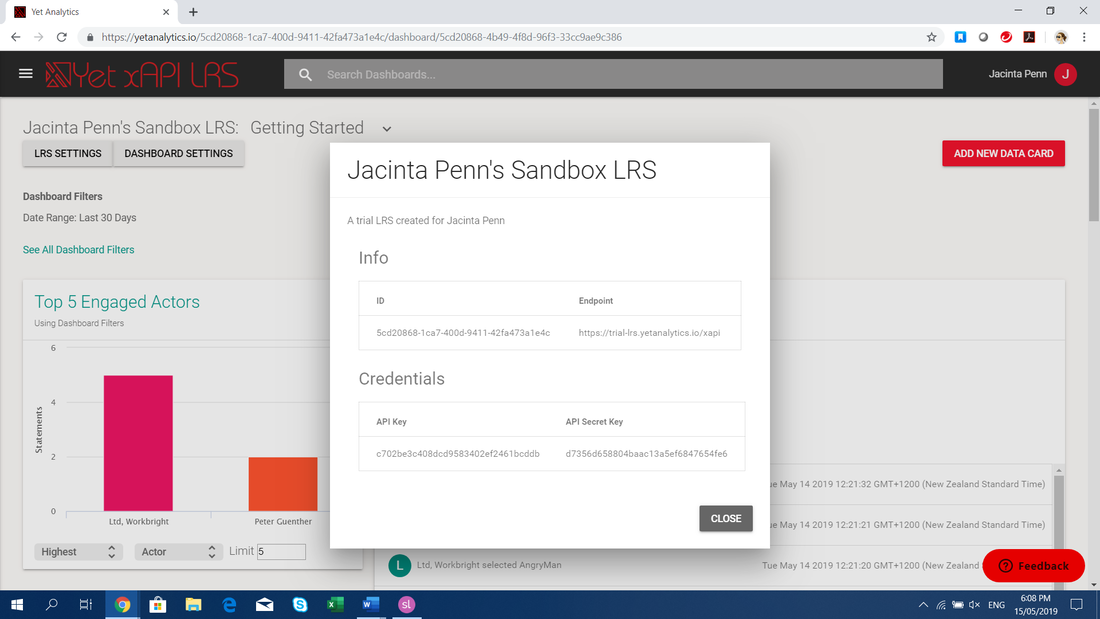

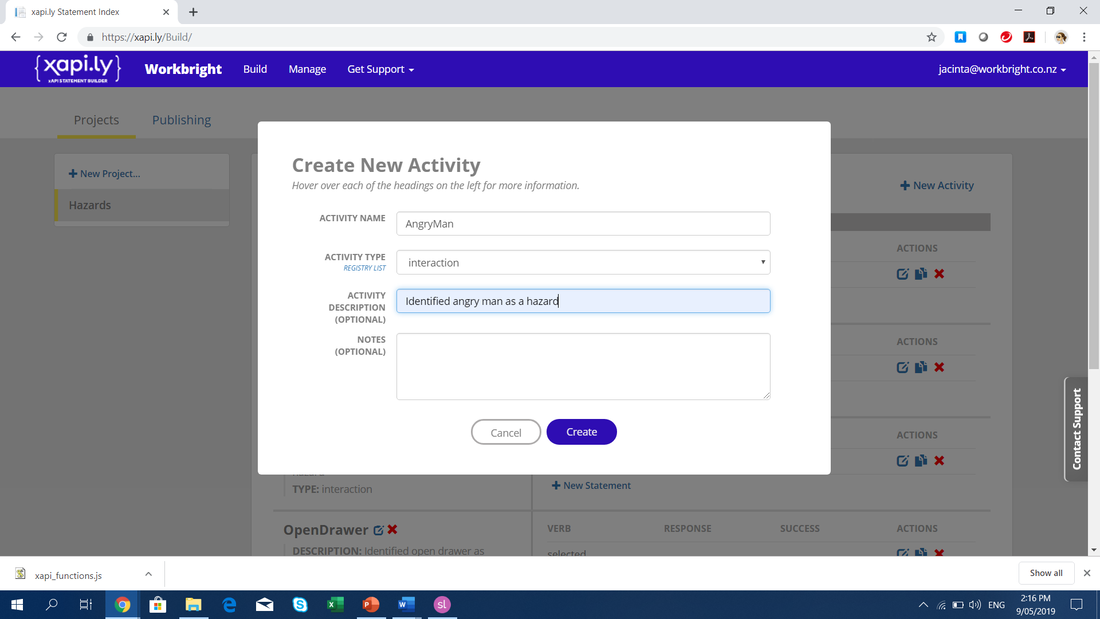

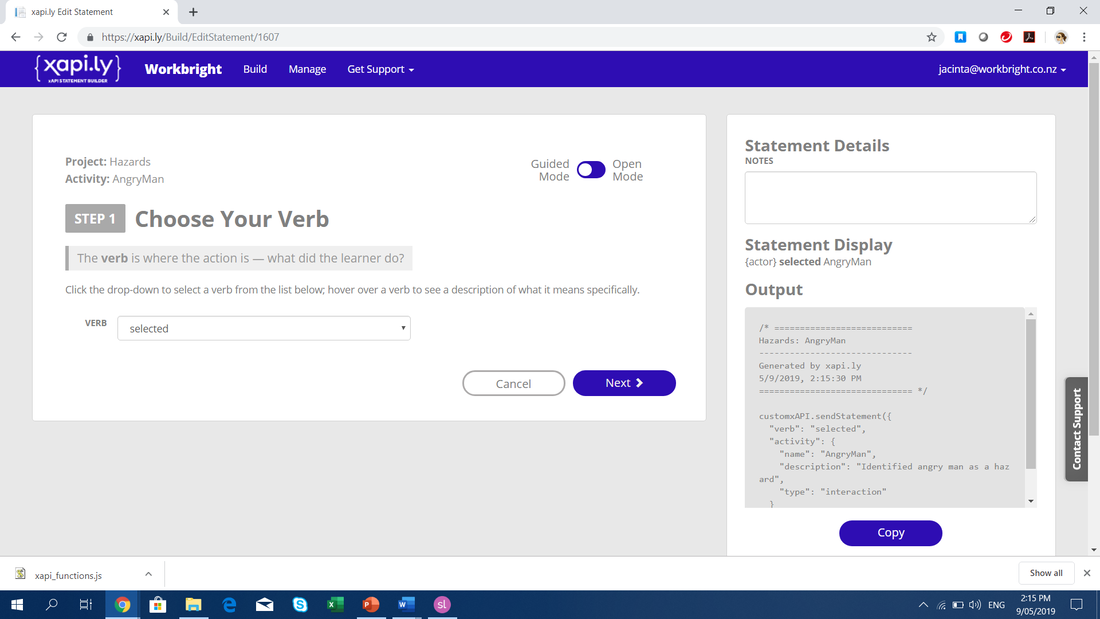

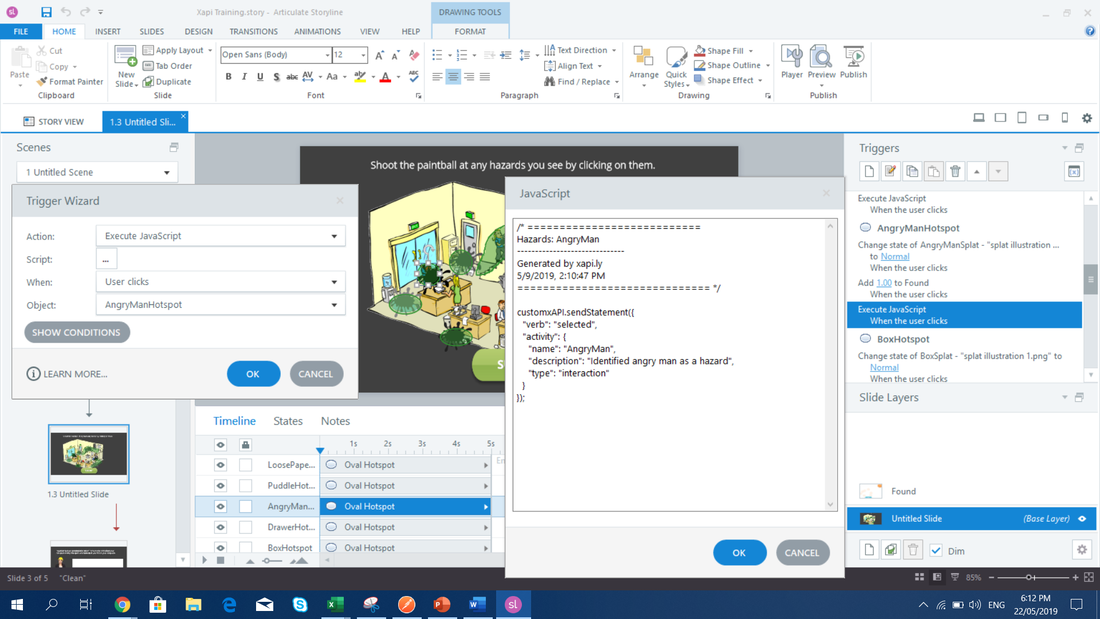

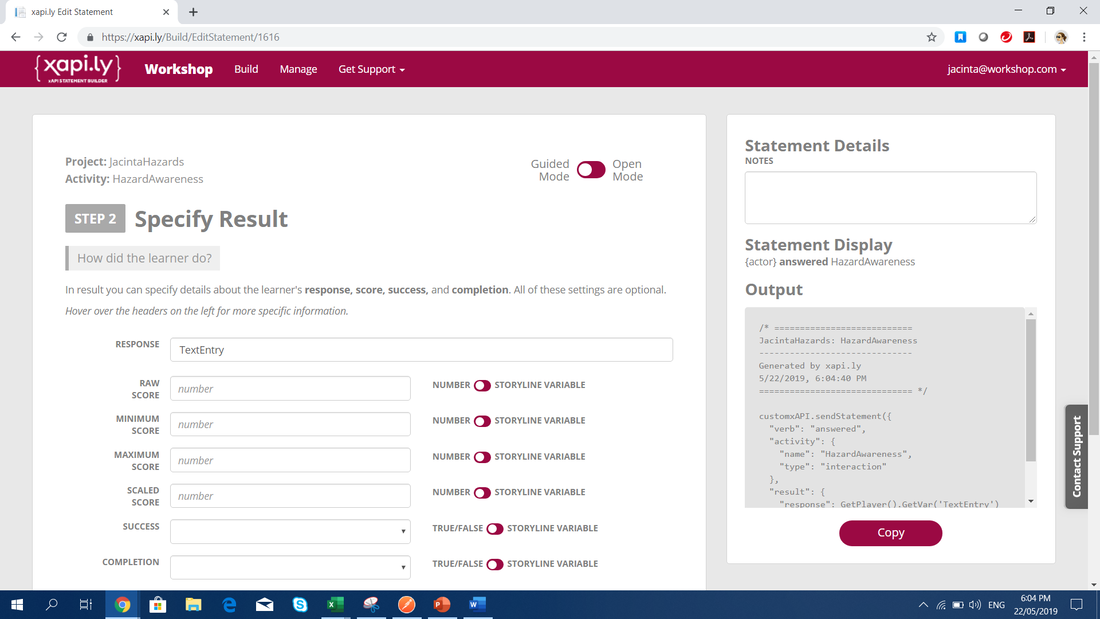

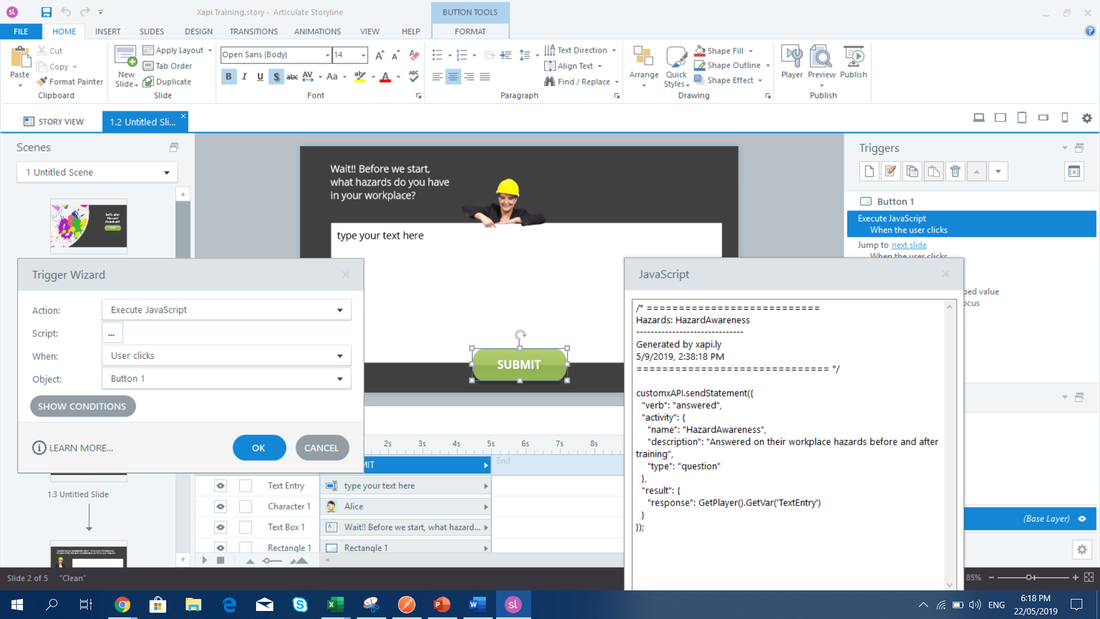

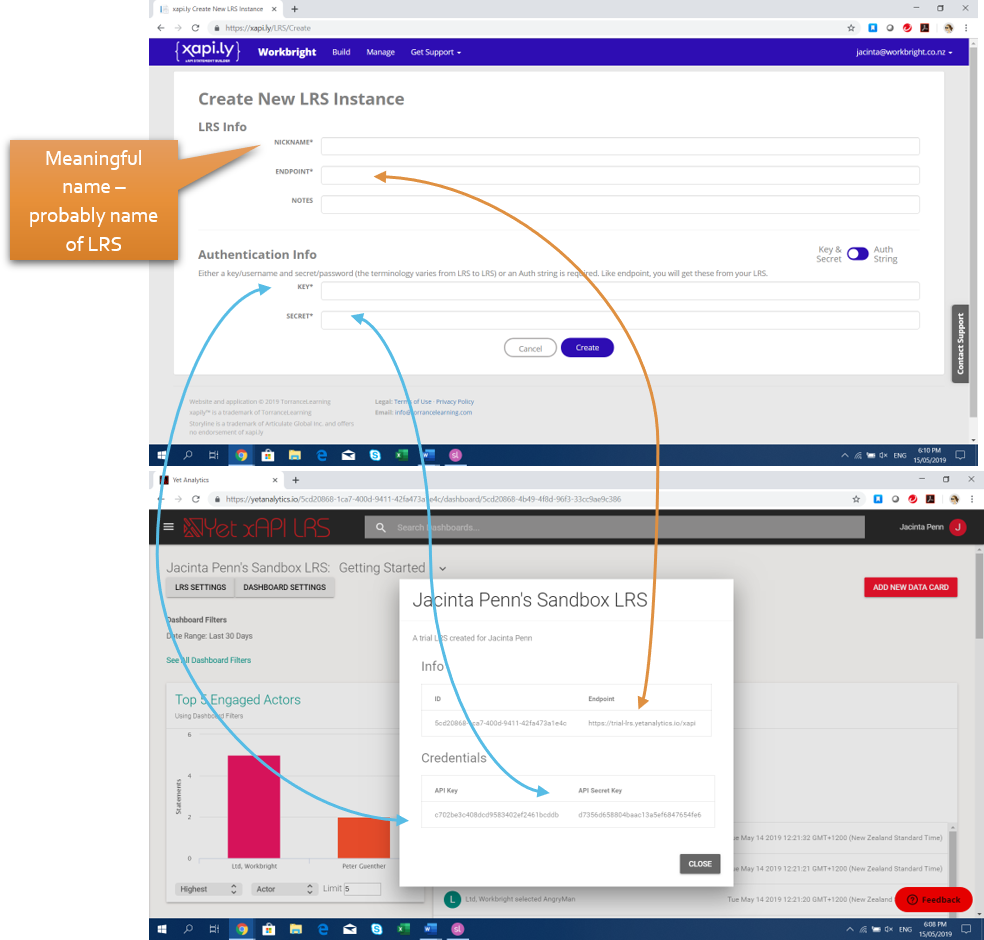

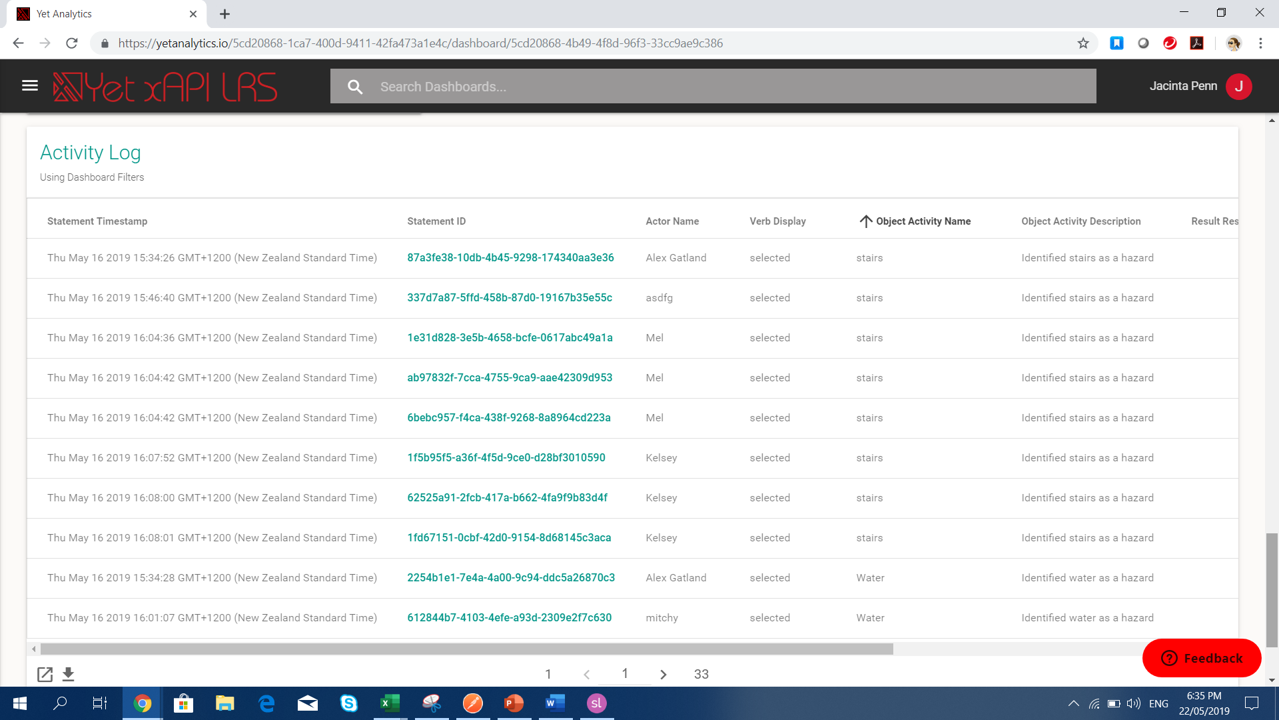

Then, as the owner of a small business, I needed to hire or subcontract other instructional designers. The applications, and even the results I got, were varied. I was shocked at the PowerPoint styles, the inconsistent text formats, the heavy content - some of which we had to totally redo before the client saw it. On the other hand, I hired others who could create a thing of beauty but needed me to deal with the client to get out the essential information.

I found out that it was a common problem for the elearning to be content based - quite literally, the policy content put online. (Please note even I have done this in the past - mea culpa.) And this was putting managers off - elearning content was boring, compliance based and often ineffective - elearning was getting a bad name.

I realised that my background in IT sales had actually given me a head start - I already knew how to elicit the information I needed to figure out the solution they needed. What I found natural at this point was actually a learned skill - I had just learned it in another industry. I also saw how keen everyone was to learn more, just as I did - attending conferences and events, reading blogs.

So I decided to do something about it. After a few years of gathering content, in between working to pay the bills and with help from a few friends (Anna Kingston, Michelle Childs, Miranda Verswijvelen and without doubt the NZATD influence), the IDEA Academy is finally live. The idea is to add courses every year until we are covering the gamut of learning that a new Instructional Designer or Elearning Developer might want to know. This year it's Instructional Design for Elearning, next year it's UX and Graphic Design, then Scenario Design and Learning Activities with both a design and a development thread. This Academy is not designed as a profit making venture (although wouldn't that be a nice surprise); it's designed to help the industry set new standards, keep improving and give elearning back its good name.
The course is done in Moodle and will take about 2-4 hours of study each week for 10 weeks. It includes a case study, examples of elearning, learning theory and models of application, and project based assessments. In addition it contains a heavy smattering of social learning, not only allowing learners to share their experience and learn from others, but teaching them how to stop and reflect on their design and application. It focuses more on rapid learning but also has some broad coverage of other tools and when to use them. Learn more at the following link: https://www.workbright.co.nz/idea.html

Recently I was asked to fill in at a conference workshop on xAPI - I had to learn xAPI and then work out how to teach it in 2 weeks. To be able to teach learners of varied levels in a 2 hour window, I looked to the xapi.ly tool. For the first time, I feel like I really understand the potential of xAPI. (Nothing like a deadline to motivate you.)

Follow these steps to learn xAPI - I really believe that the only way you can truly understand its potential is to build with it.

1) Create a short elearning module with an input box and a submit button, and a second slide with a hotspot interaction.

2) Create a sandbox trial with YetAnalytics and open the Settings, Info window. This gives you the important information you need for your xAPI statement: the endpoint, key and secret key.

3) Create an account with xapi.ly. Although this incurs a cost of US$300, it's well worth it to understand xAPI properly.

Start a project named for your elearning module. Create an activity and name it after the object you want data on, e.g. if you created a hotspot on an angry customer, call the activity Angry Man. Then add a statement, using a verb like Selected. Don't fill in any of the other fields. Copy the code in the box to the right. Return to Storyline and add a trigger to execute JavaScript, using that code, when the hotspot is clicked.

In xapi.ly add another Activity named for the input data you want to see, e.g. Hazard List. Add a statement with the verb commented. This time, in the Response field, put the variable name of the text input box. Copy the code. Return to Storyline and add a trigger to execute JavaScript when the Submit button is clicked.

In xapi.ly click on the Publish tab. Enter an LRS instance called YetAnalytics, using the info on the YetAnalytics info page. Choose the standalone option. Select your LRS instance from the dropdown. For an activity ID use a URL address that no-one else is likely to use - this is the unique identifier for the xAPI data. For instance https://yourwebsite.com/xapi/hazardtest/ Then generate the code. Copy this and paste it into a JavaScript trigger on your master slide. This ensures that the xAPI statements will be sent to the right place from every slide.

4) Publish your course to Review 360 and try it a few times. Then check your Yet Analytics account. Data will start to appear. To see the input data, add a column to the list or export the data.

What this means

Having the ability to send xAPI statements with a Storyline trigger means that we can now collect all sorts of data about our learning. We can see what people do with the resources we provide, which way they go first, which answers they know and which they miss, and we can even collect input from the learners.
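To make the hotspot and text input triggers a little more concrete, here is a minimal hand-written sketch of what sending a statement from JavaScript involves. It is not the code xapi.ly generates. The endpoint, key, secret, learner details and the `HazardList` variable name are all placeholders assumed for illustration, so swap in the values from your own LRS info page.

```javascript
// Hypothetical values: replace with the endpoint, key and secret from your own LRS.
const LRS = {
  endpoint: "https://lrs.example.com/xapi", // placeholder, not a real LRS
  key: "my-key",
  secret: "my-secret",
};

// Build an Actor-Verb-Object statement. The learner's response is optional.
function buildStatement(learner, verb, activity, response) {
  const statement = {
    actor: { objectType: "Agent", name: learner.name, mbox: "mailto:" + learner.email },
    verb: { id: verb.id, display: { "en-US": verb.name } },
    object: {
      objectType: "Activity",
      id: activity.id,
      definition: { name: { "en-US": activity.name } },
    },
  };
  if (response !== undefined && response !== "") {
    statement.result = { response: String(response) };
  }
  return statement;
}

// POST the statement to the LRS "statements" resource.
// fetchImpl can be swapped out so the function can be tried without a network.
async function sendStatement(lrs, statement, fetchImpl = fetch) {
  const url = lrs.endpoint.replace(/\/+$/, "") + "/statements";
  const reply = await fetchImpl(url, {
    method: "POST",
    headers: {
      "Content-Type": "application/json",
      "X-Experience-API-Version": "1.0.3",
      Authorization: "Basic " + btoa(lrs.key + ":" + lrs.secret),
    },
    body: JSON.stringify(statement),
  });
  if (!reply.ok) throw new Error("LRS rejected the statement: " + reply.status);
  return reply;
}

// Example: the "commented" statement for the Hazard List text input.
const hazardStatement = buildStatement(
  { name: "Test Learner", email: "learner@example.com" },
  { id: "http://adlnet.gov/expapi/verbs/commented", name: "commented" },
  { id: "https://yourwebsite.com/xapi/hazardtest/hazard-list", name: "Hazard List" },
  "Loose cable by the door"
);
console.log(JSON.stringify(hazardStatement, null, 2));
```

Inside a Storyline Execute JavaScript trigger you would read the text entry with `GetPlayer().GetVar("HazardList")`, pass it in as the response, and then call `sendStatement(LRS, hazardStatement)`.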

But more than this, we can also use query statements, so we can get information from the LRS and then show it in the learning. So we can create leaderboards, show poll results, change Storyline variables and make truly interactive experiences that change over time or draw from real databases. This is more complicated but can provide advanced experiences worthy of consideration. Basically it means the world of data is our oyster. Have a play and consider the possibilities.

So often the multiple choice quiz can be a last minute add-on to the rest of the elearning design. As with all elearning, the more thought put into the design, the better learning (or assessment) you will achieve. Recently I read an article on How to Prepare Better Multiple-Choice Test Items by Steven Burton. I thought I would summarize it here as well as providing the PDF file below. This was their summary of weaknesses in many multiple choice answers:

Firstly they covered when you should use multiple choice instead of a free text answer. Obviously it's easier for marking purposes, but their main point was that multiple choice suits situations where people can select an answer to prove their knowledge rather than having to articulate their own understanding, viewpoint or skill. They described this as when an answer may be 'selected' versus when an answer must be 'supplied'.

They pointed out that the more questions you have, the less chance learners have of guessing enough answers randomly. 10 was a surprisingly good number: with four options per question there is only about a 1 in 285 chance of guessing 70% of the answers correctly (1 in 64 is the figure for a five question quiz). 15 or 20 is even better. Then they provided the following 16 point checklist:

They also particularly delved into point 8 on distractors.

I do strongly recommend grabbing a coffee, finding a quiet place and reading through their whole article when you have a moment. For those of us looking to provide excellence in instructional design this type of small targeted improvement can really elevate our work.
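The guessing odds mentioned above are easy to check for yourself. The sketch below is my own calculation, not something taken from the article, and it assumes every question has the same number of equally likely options and that "70%" is rounded up to a whole number of questions (4 of 5, 7 of 10, 11 of 15, 14 of 20).

```javascript
// Binomial coefficient "n choose k", built up multiplicatively.
function choose(n, k) {
  let result = 1;
  for (let i = 1; i <= k; i++) {
    result = (result * (n - k + i)) / i;
  }
  return result;
}

// Probability of getting at least minCorrect of nItems right by pure guessing,
// assuming every item has nOptions equally likely alternatives.
function chanceByGuessing(nItems, nOptions, minCorrect) {
  const p = 1 / nOptions;
  let total = 0;
  for (let k = minCorrect; k <= nItems; k++) {
    total += choose(nItems, k) * Math.pow(p, k) * Math.pow(1 - p, nItems - k);
  }
  return total;
}

// 70% or better on 5, 10, 15 and 20 four-option questions.
for (const [items, needed] of [[5, 4], [10, 7], [15, 11], [20, 14]]) {
  const odds = Math.round(1 / chanceByGuessing(items, 4, needed));
  console.log(items + " questions: about 1 in " + odds);
}
```

Under these assumptions the sketch gives 1 in 64 for five questions and about 1 in 285 for ten. Change the second argument to see how quickly the odds get worse for the guesser as you add options, or better for them if you only offer two or three.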

Recently I presented at iDesignX to share what we had learnt about designing in VR. As I've discussed previously, most of our learning comes from mistakes and from spending time using other Virtual Reality apps. Here is a summary:

1) Make sure it’s 3D suitable. Should it be in VR? If your learning needs people to memorise text, read policies etc, it shouldn’t be in VR. If your training is about a 3D environment or practicing what policies mean in real life, it can be in VR. That might be hazards, introducing them to a new environment, retail sales, leadership, sexual harassment. If your training is about giving people embodied experience, it absolutely should be in VR or on the job: forklift driving, crane driving, mining, fire extinguishing, forestry.

2) Use good instructional design with action mapping. Plan your experience with action mapping, or similar problem solving techniques. Plan an experience or a story that will have them practice and become comfortable in that role or task.

3) Make it easy to use. VR is new. People are unfamiliar with it. Make the experience as easy to use as possible. Have an avatar helper or an easy to find menu, and have a tutorial for how to move around or grab items.

4) Remember it is 360 degrees. They may not always be looking the way you want, or in the right area for the learning. Use sound or pointers to send them in the right general direction.

5) Lots and lots of feedback and hints. Make things glow if you can pick them up, or enlarge when you look at options that you can select. Have an avatar give feedback with audio, instead of text saying “Correct”. People respond to emotions on faces – so have a smiling, nodding avatar say well done. Or a slight frown saying “I’m afraid that would cause a problem. Maybe you should try something else?” (Or clutching their head saying “No, what have you done?! The engine’s going to blow” – trust me, that they will remember!) This may challenge your thinking – design for the kind of feedback they might get in real life rather than just a “No”.

6) Show progress. Often. Make sure actions and challenges will happen in a timely manner without big gaps – in VR, these can seem endless. Put obstacles fairly close together so they aren’t left wandering around wondering if there is anything else to find. And if they just can’t find that last hazard, start giving them hints… plus tell them how many they have to go.

7) Make sure it’s accurate to real life. They are going to learn this in a part of their brain which directs their body movements. If you teach them on a forklift trainer which has a foot pedal, when your actual brand of forklift uses a hand lever, they will constantly be pedalling the floor while relearning. Don’t skimp on these kinds of details – pay for the change.

8) Avoid nausea. Either allow them to stay in one place or use teleportation for movement. Large numbers of users will experience nausea when you use a normal walking movement. On driving machines, keep the speed slow and blur the periphery to avoid nausea while driving. Additionally, better headsets with better frame rates, working off faster computers, will be smoother and better for brain processing.

9) Have a non VR option. Some people just can’t use VR – they don’t have binocular vision, and it’s quite common. Others have thick glasses that aren’t comfortable in a headset (some are fine). Always publish in a way that can also be viewed on a desktop or mobile. WebVR is great for this. Unity can publish to lots of options including WebVR, and it can be SCORM wrapped too. Publishing a 360 video on YouTube means you can play it naturally or select the mobile headset option. 3D animations can be created with an app that has a choice of phone or headset view.

10) Don’t mix graphic styles. If you have a low to medium poly 3D environment made by a graphic designer, don’t use a high poly photorealistic 3D object. This will jar the brain and remind them it’s not real. It is better to have a low poly environment and low poly objects, or all photorealistic, than to mix. But low poly will play much better on mobile and thin networks and is just as effective for learning.
So get out there and experiment in some of the new authoring tools - try out everything you can at expos and stores, so you can start to imagine how VR can be a great solution to some of your training problems.

As elearning professionals we always aspire to create great learning - it needs to be engaging, easy to understand, and easy to apply in the workplace. To do this we have trained ourselves on all the current learning theories: Bloom's taxonomy, Cathy Moore's action mapping, Malcolm Knowles' andragogy, Social Learning theory etc, and we try to use these in our work, to make it work.

One theory is that of cognitive overload: John Sweller theorised that instructional design can help to reduce cognitive load when problem solving (cognitive load being the mental effort required within the brain's memory to solve the problem). Heavy cognitive load can have an effect on task completion, i.e. if it's too complicated and you need to remember too much at one time, you are unlikely to get the job done right!

Embodied Cognition is a new theory where cognition is the connection between the body and the mind. It states that it is not just our mind that shapes our world view: our body, and the experiences it has, also lead to learning activities in the mind. This is of course yet another reason why virtual reality is going to become the gold standard of training - not just your mind but your body can experience ducking a hazard, setting up a machine, driving a crane etc. Or you have learned about the theory of fertilisation and low motility and then experience a VR of a sperm swimming up the canal, racing the others to get to the egg but running out of energy just before the goal, every time. Then a VR of getting to the egg in the IVF setting - bingo, easy! How much more memorable would that be? (Sigh, really worried about the comments I could get for this example, please keep it clean people!)
So we have this new tool that enables people to cement their learning in the part of the brain where they USE knowledge, rather than STORE knowledge, and it can make our learning so powerful. However, with great power comes great responsibility. As VR designers, we need to take into account the issues of cognitive overload for our learners in VR.

They are quite likely in a very new setting: not just a new virtual environment, but possibly never having used controllers before, or even VR at all. They won't know what to do, and may be overwhelmed by being in a 360 setting. Additionally, we find some people don't turn around and look at all, and stand with feet frozen, while others look around wildly. These reactions need to be taken into account, as does our design planning for the learning. Let's make it easy for our learners - make the challenge about the learning, not figuring out HOW to learn. Here are our tips:

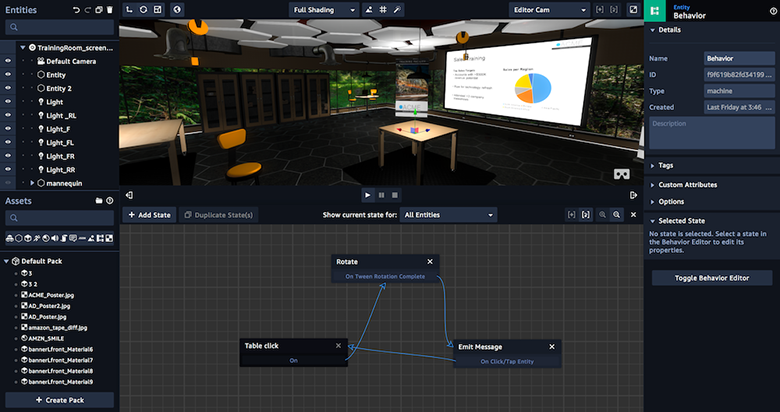

Overall our best advice is keep it simple, and make it easy. If you have other ideas on how to reduce cognitive load in VR, we welcome your comments below or on Twitter. We hope this has been helpful for you in considering your Virtual Reality training project. If you have some ideas on some VR training and would like to discuss with us how they could be done, please get in touch. We are able to work globally.

Jacinta Penn

[email protected]

For more reading on this topic:

digitalculturist.com/embodied-cognition-and-the-possibility-of-virtual-reality-ca2ec8fd05ea

virtualrealitypop.com/reducing-cognitive-load-in-vr-d922ef8c6876

www.researchgate.net/publication/305027149_The_Impact_of_a_Self-Avatar_on_Cognitive_Load_in_Immersive_Virtual_Reality

As part of our role as industry leaders and knowledge sharers, we spend some time each week trawling Twitter, technology news sites and other sources for the latest in what's happening. For items of particular interest we will research further, engage in free trials etc. This week has been a life changing week for those of us working in the field of VR, for two reasons.

Firstly, Magic Leap has finally released the details of their new Mixed Reality goggles. This has been hugely anticipated, but the release was drawn out so long that some people did begin to wonder if it was a scam. However, the faith of investors like Google has paid off in the end, with Magic Leap releasing something so new it may take people a while to get their heads around it. Run by a computer the size of an old CD Walkman, with goggles that look like a cross between a sunglasses advert and a Willy Wonka poster, it certainly does not look like the VR and AR we have become familiar with.

But the magic is what is inside the technology - the mixed reality. Augmented reality is the ability to overlay something on top of the reality we see. Mixed Reality is when we can put 3D virtual objects in our real world and have them interact with, or more accurately, around it. The sensors on the Magic Leap headset, Lightwear, combined with a controller, identify the objects in your space and use them as part of the environment for their software. In addition it recognises eye direction, head movements and gestures.

Think about that for a second - robots that jump out of your ceiling, waterfalls that run off the edge of your table and butterflies that land on your couch. A virtual TV screen you can put on a real wall, and leave there still playing, while you look elsewhere; multiple computer screens in front of you with your work; your kids sitting at the table eating dinner while a virtual puppy sits at their feet. And all of it able to happen at the same time.
Now move on to consider how this can be used for organisational training: fire drills or firefighter training in a real environment, with flames, smoke and things falling around you; information popping up on any machine you look at in a plant, that you can reach out and touch for more detail; experts being able to pop in and help problem solve; avatars that appear and give you a tour around a facility or museum; role play with virtual customers in a real world retail space or the inside of an airliner. The possibilities are endless.

The SDK will be available in 2018 and developers like ourselves will be able to buy the headset, but it won't be easy. Imagine trying to plan a design with no prior knowledge of the space or furniture - it will certainly be a new challenge. But most of all, it's different - right now you can create something once in Unity or a VR authoring tool and then publish to multiple headsets, particularly Vive and Oculus. But the Magic Leap SDK is proprietary - it will only work with the Magic Leap Lightwear goggles. This may slow down its distribution, but the advantages and opportunities may outweigh these negatives in the long run.

Secondly, this week Amazon Web Services has, out of the blue, released a VR authoring tool, Sumerian. They are still in the beta process and people have had to apply for the preview, but I was lucky enough to get in early thanks to the helpful and responsive CEO Kyle Roche. I was given access after completing multiple tutorials in anticipation of the preview release and tweeting about them. With these helpful guides open in one web browser and Sumerian in the other, I was quickly able to develop my first VR space. I hit a few snags, but they had kindly provided a Slack channel where I could ask for help. While they are still perfecting it and ironing out the glitches, I would already recommend it strongly, for its ease of use, intuitive interface and ability to customise so many different actions.
One note of advice - it's easy to move from place to place in your scene, but moving to a totally different scene would require a new window to load, so consider carefully how you could handle your whole training in one 3D environment - that might mean you have to let go of reality a little, and have a reception that leads to a shop floor that leads to a distribution warehouse.

As someone who uses software all the time and to quite a high level, I have thus far found VR frustrating. I'm a user not a coder - I can't work in Unity myself and I have to give that to someone else to do, which means I can't always make good suggestions on fixes or shortcuts. Now with Sumerian I can import my 3D scene (FBX or OBJ formats), add objects, enable the learner to teleport around the room, give them the ability to grab or interact with objects, change things when they are touched etc, all without writing one line of code. I simply drag and drop a pre-written script such as "drag" or "teleport" and assign it to a button on the controller. It also has triggers and conditions similar to Storyline but done in a different way - it takes a bit of getting your head around to switch to their way of thinking, but then it makes perfect sense. And that's where Amazon have really excelled - like Articulate, their learning tutorials are easy to read, step by step instructions, plus they are ready to help on their Slack channel.

I predict this will be the next wave of digital learning - Virtual Reality training done as easily and affordably as elearning is now, using VR authoring tools. Sumerian right now for true Virtual Reality, and CenarioVR from Trivantis next year for VR using 360 photos and video, with xAPI and Lectora options to put in your LMS. It's the new wave and it's starting now.

The other day I spent the entire day playing VR. I mean researching. You know you are in a dream job when you can justify playing with VR all day as research for the cause of more comfortable design.
And in that day I learned many tips which I want to share with you. Within the next 12 months we will see more and more experiences in VR and there will be more to learn and share. Sign up to our newsletter to hear more.

Jacinta Penn

We have been developing in VR and AR for some time now, but the next step is coupling this with xAPI (Experience API, formerly Tin Can). In this post I want to talk a bit more about xAPI and how it can change the way you measure, report and adjust learning for your users - and not just for VR and AR, but for any learning you provide or learning that is done outside the traditional modes.

What is xAPI?

Rather than focusing on content and whether a learner has passed or failed a piece of structured learning (as SCORM does), xAPI is about collecting what the learner does, their interactions, as they learn both formally and informally. Put simply, it is learner focused, shifting the view from content to the learner by collecting data from multiple sources and environments. The xAPI structure is actually quite simple - it uses basic statements presented as Actor-Verb-Object. So these statements might include something like 'Ray downloaded and completed safety checklist', 'Alison failed driving test' or 'Tamati used his mobile device to learn how to sell iPhone X'.

How can xAPI help me?

Here are some ways xAPI can help you get more out of your learning:

xAPI and VR

This last option is particularly interesting for us at Workbright. Imagine collecting data on every response or interaction performed by a learner within a VR environment. This way we can track where people look, their head movements, what they touch in VR and their response to simulated situations. For example, with our Fire Warden training we might collect data on what areas people visited, the time spent in these areas, what hazards were identified, how learners responded to opposition/refusals to leave and so on. This opens up so much more in terms of reporting, analysis of behaviours and tailoring future training to better suit learners' needs. In the VR space, xAPI just makes sense; coupled with the immersive experience of VR, we can collect data that helps identify gaps in learning, shape future learning and have better follow up discussions on training.

So what do I do next?

We can help you with the key steps to get prepared for xAPI, and you may be surprised to learn that your current LMS most likely can deal with xAPI already. If you want to learn more about xAPI, VR or both, contact me for more information at [email protected].
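For anyone curious what Actor-Verb-Object looks like once VR events are turned into data, here is a rough sketch. It is an illustration only, not how our Fire Warden training is actually built: the event log, the learner, the `BASE` address and the mapping from event names to verbs are all made up for the example.

```javascript
// Hypothetical base address for activity IDs; use a URL you control.
const BASE = "https://example.com/xapi/fire-warden/";

// Illustrative mapping from our own event names to ADL verbs.
const VERBS = {
  visited: { id: "http://adlnet.gov/expapi/verbs/experienced", name: "experienced" },
  touched: { id: "http://adlnet.gov/expapi/verbs/interacted", name: "interacted" },
};

// Turn "Stairwell B" into "stairwell-b" for use in an activity ID.
function slug(text) {
  return text.toLowerCase().replace(/[^a-z0-9]+/g, "-").replace(/^-+|-+$/g, "");
}

// xAPI expects result.duration as an ISO 8601 duration, e.g. "PT42.5S".
function toIsoDuration(seconds) {
  return "PT" + Math.round(seconds * 100) / 100 + "S";
}

// Build one Actor-Verb-Object statement from a logged VR event.
function toStatement(learner, event) {
  const verb = VERBS[event.type];
  if (!verb) throw new Error("No verb mapped for event type: " + event.type);
  const statement = {
    actor: { objectType: "Agent", name: learner.name, mbox: "mailto:" + learner.email },
    verb: { id: verb.id, display: { "en-US": verb.name } },
    object: {
      objectType: "Activity",
      id: BASE + slug(event.target),
      definition: { name: { "en-US": event.target } },
    },
    timestamp: event.at,
  };
  // Time spent is only recorded for events that carry a duration.
  if (typeof event.seconds === "number") {
    statement.result = { duration: toIsoDuration(event.seconds) };
  }
  return statement;
}

// Hypothetical log of one learner's session.
const learner = { name: "Alison", email: "alison@example.com" };
const log = [
  { type: "visited", target: "Stairwell B", seconds: 42.5, at: "2023-01-15T09:00:00Z" },
  { type: "touched", target: "Fire extinguisher", at: "2023-01-15T09:01:10Z" },
];
const statements = log.map((event) => toStatement(learner, event));
console.log(JSON.stringify(statements, null, 2));
```

Each logged event becomes one statement, so areas visited, time spent and objects touched all end up in the LRS in the same shape and can be reported on together.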


Author: Jacinta Penn
